Recruitment Bias Isn’t a People Problem: It’s a Process Problem

Author

Alan Price

Last Update

April 01, 2026

About the author

Alan Price serves as the Director of Talent Acquisition at Deel, overseeing talent acquisition teams in the US, LATAM, EMEA, and APAC regions. Before joining Deel, Alan was a founding member of the micro-mobility company Dott, where he held the position of Vice President of People. Prior to his role at Dott, he held senior positions at Uber and Google.

There are 188 types of unconscious bias that could infiltrate your recruitment process. Some types of bias will be familiar. For example, confirmation bias encourages hirers to look for information that supports their existing views, while affinity bias is the tendency to gravitate toward candidates whose backgrounds or interests resemble our own.

But while bias in recruitment feels unfair and frustrating, it’s rarely rooted in malice. Instead, bias is a natural cognitive shortcut — something humans do to sort through problems and make judgments. It’s fast and efficient, but sometimes we get it wrong, even when we have the best intentions.

That's why structured hiring is so important. I truly believe that recruitment bias boils down to the quality of the processes and tools we use — and when every decision flows through a fair and consistent system, there’s less room for inconsistencies to sneak in.

Bad hiring processes are bias magnets

Traditional hiring practices are riddled with unconscious bias. We’ve all seen or heard of people who’ve landed a great role because of who they know, or who their dad went to college with. Other candidates applying for the same role would have missed out because of the lack of structure in the hiring process. The successful candidate was able to jump the queue or have extra attention paid to their application.

Even when favoritism isn’t blatant, there are still plenty of opportunities for hiring bias to rear its ugly head, especially when you’re scaling your hiring process. When every hiring decision is based on who's in the room that day, your hiring “process” is really just a series of individual opinions rather than a uniform approach to recruitment.

Most of the bias I see in recruitment comes from a lack of structure rather than deliberate intent. It happens because different interviewers ask different questions and apply their own standards to each candidate. Instead of scoring every candidate against a shared rubric, they put their own individual stamp on the hiring process, backed by their own unconscious bias. Human energy levels play their part too. The person who applied on day one gets fresh eyes and a full quota of enthusiasm, while the person whose application is reviewed in under 10 seconds on a Friday afternoon just isn’t taken as seriously.

Fragmented tooling adds to the lack of consistency. When interview notes live in Slack, scores sit in a spreadsheet, and feedback exists only in someone's head, there's no audit trail and any hint of accountability vanishes. You can't spot bias if you don’t have access to the full picture.

Bias can impact any size or type of organization, but it often hits small to medium-sized businesses (SMBs) the hardest. Lean teams need to make high-stakes hiring decisions fast, often without dedicated HR support. But every hire matters more when your team is small, which makes the absence of structure even more costly.

Fair hiring depends on structure

Structured hiring is the antidote to bias and an integral part of your recruitment strategy. It relies on a repeatable, documented process where you apply the same criteria to every candidate. For example, using standardized questions lets candidates respond to the same set of prompts. Meanwhile, hirers use structured interview scorecards to rate candidates based on the same competencies. Early-round knock-out questions are also useful for shortlisting candidates instead of relying on gut feel.
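To make the scorecard idea concrete, here is a minimal sketch in Python. The competency names, the 1 to 5 scale, and the `Scorecard`, `validate`, and `summarize` names are all assumptions chosen for illustration; they are not taken from any particular ATS. The point is only that every interviewer must rate the same competencies on the same scale before their scores count.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical rubric: competency names and the 1-5 scale are illustrative only.
COMPETENCIES = ("problem_solving", "communication", "role_knowledge")
SCALE = range(1, 6)


@dataclass
class Scorecard:
    candidate: str
    interviewer: str
    ratings: dict  # competency name -> integer rating


def validate(card):
    """Reject a scorecard that skips a competency, adds one, or leaves the scale."""
    missing = set(COMPETENCIES) - set(card.ratings)
    extra = set(card.ratings) - set(COMPETENCIES)
    if missing or extra:
        raise ValueError(
            f"{card.interviewer}: missing={sorted(missing)} extra={sorted(extra)}"
        )
    for name, rating in card.ratings.items():
        if rating not in SCALE:
            raise ValueError(f"{card.interviewer}: {name}={rating} is off the scale")


def summarize(cards):
    """Average each competency per candidate across all valid scorecards."""
    by_candidate = {}
    for card in cards:
        validate(card)
        by_candidate.setdefault(card.candidate, []).append(card)
    return {
        candidate: {
            name: mean(card.ratings[name] for card in group)
            for name in COMPETENCIES
        }
        for candidate, group in by_candidate.items()
    }
```

Rejecting incomplete scorecards outright, rather than averaging whatever was filled in, is a deliberate choice in this sketch: it stops one interviewer's partial impressions from quietly carrying more or less weight than another's.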

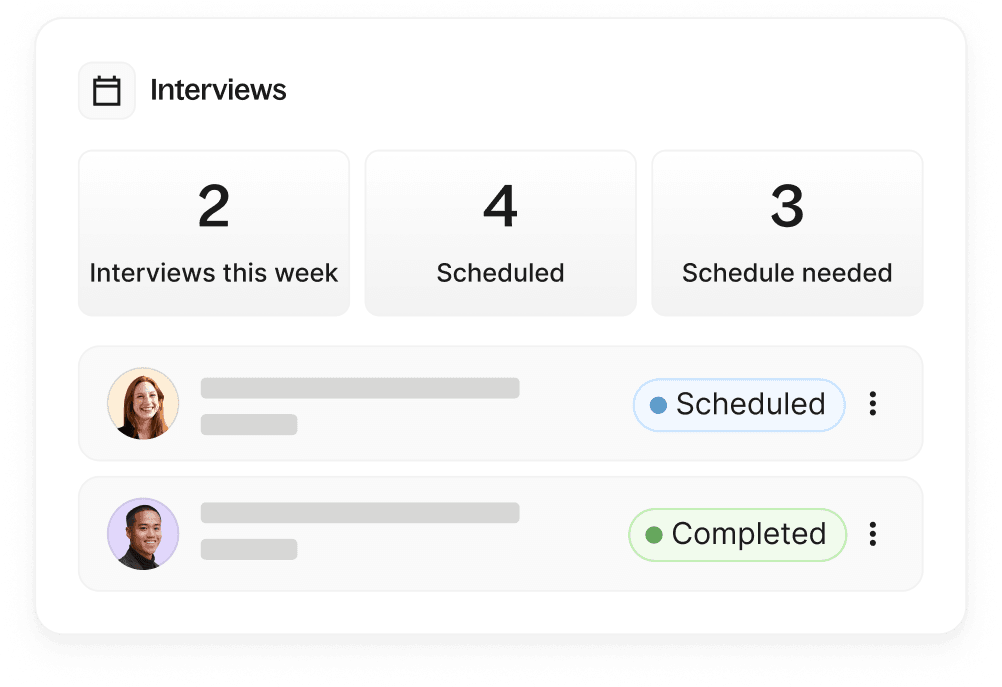

The right applicant tracking system (ATS) is what holds all of this together, giving your whole hiring team a single, shared view of every candidate and every decision.

When you can see the whole pipeline in one place, it’s easier to see patterns: who's dropping out, at what stage, and why. This visibility is the start of developing real accountability, because you can't fix what you can't measure, and you can't measure what you can't see.
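As a rough illustration of what "seeing the pipeline" can mean in practice, the sketch below counts how many candidates reached each stage and how many advanced to the next one. The stage names and the `stage_conversion` function are hypothetical, and the input (each candidate's furthest stage reached) is an assumed export format, not a real ATS schema.

```python
from collections import Counter

# Hypothetical stage names, listed in pipeline order.
STAGES = ["applied", "screen", "interview", "offer", "hired"]


def stage_conversion(furthest_stage):
    """Return (from_stage, to_stage, entered, advanced, rate) for each step.

    `furthest_stage` maps a candidate id to the last stage that candidate
    reached. A candidate who reached a stage also passed every earlier one.
    """
    reached = Counter()
    for stage in furthest_stage.values():
        position = STAGES.index(stage)  # raises ValueError on an unknown stage
        for passed in STAGES[: position + 1]:
            reached[passed] += 1

    rows = []
    for prev, cur in zip(STAGES, STAGES[1:]):
        entered = reached[prev]
        advanced = reached[cur]
        rate = advanced / entered if entered else None  # None when no one entered
        rows.append((prev, cur, entered, advanced, rate))
    return rows
```

A table like this only shows where candidates drop out. The "why" still depends on the notes and scorecards being recorded in the same place, which is the argument for a single shared system.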

How AI can (and can’t) support hiring bias reduction

Artificial intelligence fits structured hiring processes like a glove. It provides the means to scale hiring consistently without relying solely on human judgment, which, of course, is fallible by design.

Recently, 102,000 people simultaneously applied for open roles at Deel across many different positions. When I posted about using AI-assisted screening to review them, it sparked a debate about the fairness of using AI in talent acquisition. But the question I kept coming back to was: fair compared to what? Because let’s face it, humans don’t have a great reputation for making fair decisions either; we've just been doing it long enough that the unfairness feels normal.

AI in hiring isn't a perfect solution; it carries its own risks, and I won't pretend otherwise. But what it does well is apply your criteria consistently, at scale. AI-assisted CV-to-job matching works from the information candidates submit and the requirements your team defines, and it applies those criteria to every application in the same way, without the fatigue or inconsistency that creeps into manual screening at volume. Crucially, it’s your human team that decides who moves forward. In my opinion, that decision should never be handed off to AI. Human-in-the-loop will always be critical.

Consistency extends beyond screening. AI-generated transcript summaries help interviewers align on what candidates said in a conversation. It’s a powerful way to overcome recall bias, where we remember the details that confirm what we already thought about a candidate, and forget the ones that don't.

For anyone concerned about privacy, recording is an optional feature. Candidates are notified before it takes place and can choose to opt out.

None of this makes AI a silver bullet. A biased job brief will produce biased matching, and poorly defined criteria will produce inconsistent results. Artificial intelligence is only as fair as the process it sits inside.

Scale is where good hiring intentions break down

Scale is one variable that really makes the case for structured hiring. It’s easy to be fair and consistent when you're hiring one person at a time, and much harder when you’re hiring across dozens of countries simultaneously.

Deel's 2025 State of Global Hiring Report puts the scale of the hiring challenge into context. Among top-funded startups alone, more than 1,400 cross-border employees were hired in 2025, with the UK, Canada, and Germany absorbing the largest share.

The most common roles, software developers, sales managers, and business developers, are precisely the high-volume categories where candidate evaluation is most susceptible to the inconsistencies I described earlier. The more applications you're processing, the more opportunities there are for bias to creep in through fatigue and vibe-first decisions.

AI trainer positions are the fastest-growing role category on Deel’s platform, growing 283% cross-border in 2025. Recruiters are tasked with filling these new role categories at speed, often without established hiring playbooks or agreed evaluation criteria. When there's no template for what good looks like, the risk of inconsistent (and therefore biased) decision-making is highest.

Compliance is a shared responsibility

Hiring across borders at this pace creates legal and compliance risk, too. The same structural weaknesses that allow bias to flourish also leave you exposed when hiring decisions are scrutinized across different legal jurisdictions. Structured tooling becomes the only way to grow without those risks growing alongside you.

But it’s worth remembering that no tool can make you compliant with regulations like GDPR and CCPA, equal employment requirements, or local labor laws by default. Compliance is a shared responsibility between your team and the systems you use.

A good ATS can support your compliance requirements, but only if you first configure the controls to suit your context. For example, you might set up custom permissions and approval flows that produce an audit trail showing the right people were involved in the right decisions at the right time.

This is vital when you’re hiring in multiple countries simultaneously. You likely can’t rely on someone remembering the local rules in every jurisdiction. But you can use an applicant tracking system with built-in salary benchmarks and compliant contract generation to keep offers and contracts consistent across markets.

The best hiring teams overcome bias with good systems

I've never met a hiring manager who set out to be biased. Most people in recruitment genuinely want to find the best person for the job and give every candidate their shot. But good intentions, without the right process to back them up, only get you so far.

For companies scaling their global hiring process today, the stakes are higher than ever. More countries, more roles, more candidates struggling to find work, more complexity, and more opportunities for inconsistency to become something that looks a lot like systemic bias. The only realistic response to that is to develop a better process. Structure isn't the enemy of human judgment; it's what makes human judgment more trustworthy.

At Deel, we create global hiring solutions that work with your team and not against them. To see how Deel’s ATS supports fairer, more consistent hiring, book your demo today.
